AI Assurance Cases: Ship Safety-Critical Models With Proof

AI Risk & Safety

Nov 14, 2025

Safety is not a slide. It is a body of evidence. In defense, aerospace, and other safety-critical programs, leaders now ask a simple question: can we prove this model is fit for purpose, for these users, under these conditions? An assurance case answers that question with a structured argument that ties claims to verifiable proof. Done well, approvals move faster, audits close cleanly, and teams scale without stalling on risk.

What an AI assurance case is

Think of an assurance case as a living safety dossier. It makes a clear claim about a model’s fitness for a specific workflow, explains why that claim is credible, and links to the evidence. The pattern comes from aviation and medical devices. State the goal. Show the arguments. Attach the proof. For AI, the proof spans data suitability, training lineage, evaluation suites, red-team results, operational logs, and change records. It evolves with every release and every drift review.

One claim per high-risk workflow keeps the case readable. Example: “The identity-verification model is safe to operate at the stated threshold for the following populations and conditions.” Everything in the case supports or limits that claim.
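One way to keep the claim and its evidence together is a small record type. The sketch below is illustrative only: the class names, fields, and IDs such as `eval-001` are assumptions made for this example, not a standard schema.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    evidence_id: str      # hypothetical registry ID
    kind: str             # e.g. "evaluation", "red_team", "drift_review"
    supports_claim: bool  # False marks counter-evidence that limits the claim


@dataclass
class AssuranceCase:
    workflow: str
    claim: str
    conditions: list[str]
    evidence: list[Evidence] = field(default_factory=list)

    def limits(self) -> list[Evidence]:
        """Return the counter-evidence items that bound the claim."""
        return [e for e in self.evidence if not e.supports_claim]


case = AssuranceCase(
    workflow="identity-verification",
    claim="Safe to operate at the stated threshold for the listed populations and conditions.",
    conditions=["stated threshold", "listed populations"],
)
case.evidence.append(Evidence("eval-001", "evaluation", supports_claim=True))
case.evidence.append(Evidence("rt-007", "red_team", supports_claim=False))
print([e.evidence_id for e in case.limits()])
```

Keeping supporting and limiting evidence in the same list makes it hard to publish a claim without its known limits.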

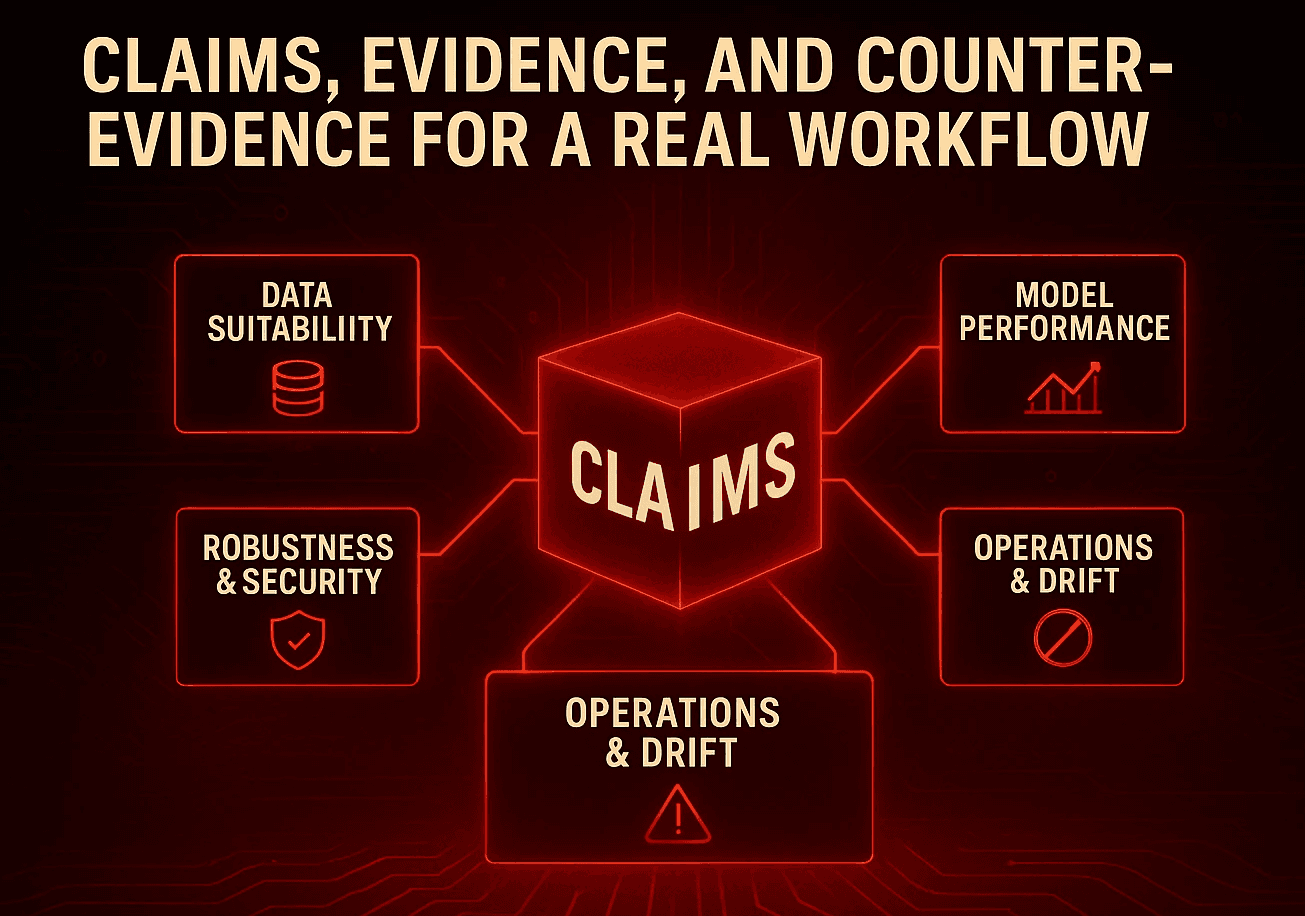

Claims, evidence, and counter-evidence for a real workflow

Structure the case so a board member can parse it in minutes and an auditor can verify it in depth.

Data suitability: Show the dataset sources, sampling, representativeness checks, and data quality screens. Include population coverage and known limits. Link to privacy controls and data handling approvals.

Model performance: Report precision, recall, false positives and false negatives by segment. Include stress tests for edge conditions and low-light scenarios if you operate in the physical world. Call out thresholds and operating points, not just averages.

Robustness and security: Attach adversarial testing and jailbreak attempts with mitigations. If an agent can act on tools, show scopes, budgets, and blocked action logs. Include misuse testing that simulates realistic operator error.

Operations and drift: Present live metrics, alert thresholds, and the last drift review. Show actions taken, not only charts. Include rollback capability and the mean time to mitigation for the last quarter.

Governance: List owners, approvals, sign-offs, and access controls. Link to change tickets. Prove separation of duties between builders and approvers.

Counter-evidence: A strong case includes limits. Document failure modes, populations with reduced accuracy, and conditions where the model is not approved. State the escalation path when those limits are reached.
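Reporting by segment rather than on average can be sketched in a few lines. This is a minimal example under stated assumptions: the record layout `(segment, y_true, y_pred)` and the segment names `daylight` and `low_light` are invented for illustration.

```python
from collections import defaultdict


def metrics_by_segment(records):
    """records: iterable of (segment, y_true, y_pred) with boolean labels."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0, "tn": 0})
    for segment, y_true, y_pred in records:
        if y_pred and y_true:
            key = "tp"
        elif y_pred and not y_true:
            key = "fp"
        elif not y_pred and y_true:
            key = "fn"
        else:
            key = "tn"
        counts[segment][key] += 1

    report = {}
    for segment, c in counts.items():
        predicted_pos = c["tp"] + c["fp"]
        actual_pos = c["tp"] + c["fn"]
        report[segment] = {
            **c,
            # None signals "undefined for this segment" instead of a misleading 0.
            "precision": c["tp"] / predicted_pos if predicted_pos else None,
            "recall": c["tp"] / actual_pos if actual_pos else None,
        }
    return report


sample = [
    ("daylight", True, True),
    ("daylight", False, False),
    ("low_light", True, False),
    ("low_light", True, True),
    ("low_light", False, True),
]
report = metrics_by_segment(sample)
print(report["low_light"])
```

In this toy sample the pooled numbers would hide that `low_light` carries every error, which is the kind of limit the counter-evidence section should record.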

Building the evidence graph in your registry and SIEM

Proof should not live in slides or inboxes. Build a simple graph of signed artifacts and logs.

Registries: Register models, datasets, and evaluation suites with unique IDs, owners, and version history. Record lineage from data through training to deployment. Store signatures and attestations at build and promotion.

Event trails: Emit structured events for dataset approval, feature transformations, training jobs, promotion gates, inference calls, human overrides, and rollbacks. Route events to your SIEM with immutability controls and retention windows that match your regulatory posture.

Reviewer view: Expose a readable view that links the claim to the evidence. Keep it consumable by non-engineers. One page for the claim and arguments. One click to export the evidence packet.

Export on demand: Make the entire case exportable in under one minute. Include a manifest, signatures, and hashes so external assessors can verify integrity.
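The manifest idea can be sketched with the Python standard library. This is a simplified illustration, not a prescribed format: it uses SHA-256 hashes and an HMAC over the manifest, which assumes the assessor shares a secret key. A real program would more likely use asymmetric signatures so external parties can verify without holding a secret. The artifact names and key are hypothetical.

```python
import hashlib
import hmac
import json


def build_manifest(artifacts: dict[str, bytes], signing_key: bytes) -> dict:
    """Hash every artifact and sign the sorted hash list."""
    entries = {
        name: hashlib.sha256(data).hexdigest()
        for name, data in sorted(artifacts.items())
    }
    payload = json.dumps(entries, sort_keys=True).encode("utf-8")
    signature = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return {"artifacts": entries, "signature": signature}


def verify_manifest(manifest: dict, artifacts: dict[str, bytes], signing_key: bytes) -> bool:
    """Recompute hashes and signature from the files and compare with the manifest."""
    expected = build_manifest(artifacts, signing_key)
    same_signature = hmac.compare_digest(expected["signature"], manifest["signature"])
    return same_signature and expected["artifacts"] == manifest["artifacts"]


key = b"demo-key-not-for-production"  # hypothetical key for the example
packet = {"eval_report.json": b'{"recall": 0.97}', "model_card.md": b"# Model card"}
manifest = build_manifest(packet, key)
print(verify_manifest(manifest, packet, key))
```

Any change to an artifact after export changes its hash, so verification fails and the assessor knows the packet no longer matches what was signed.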

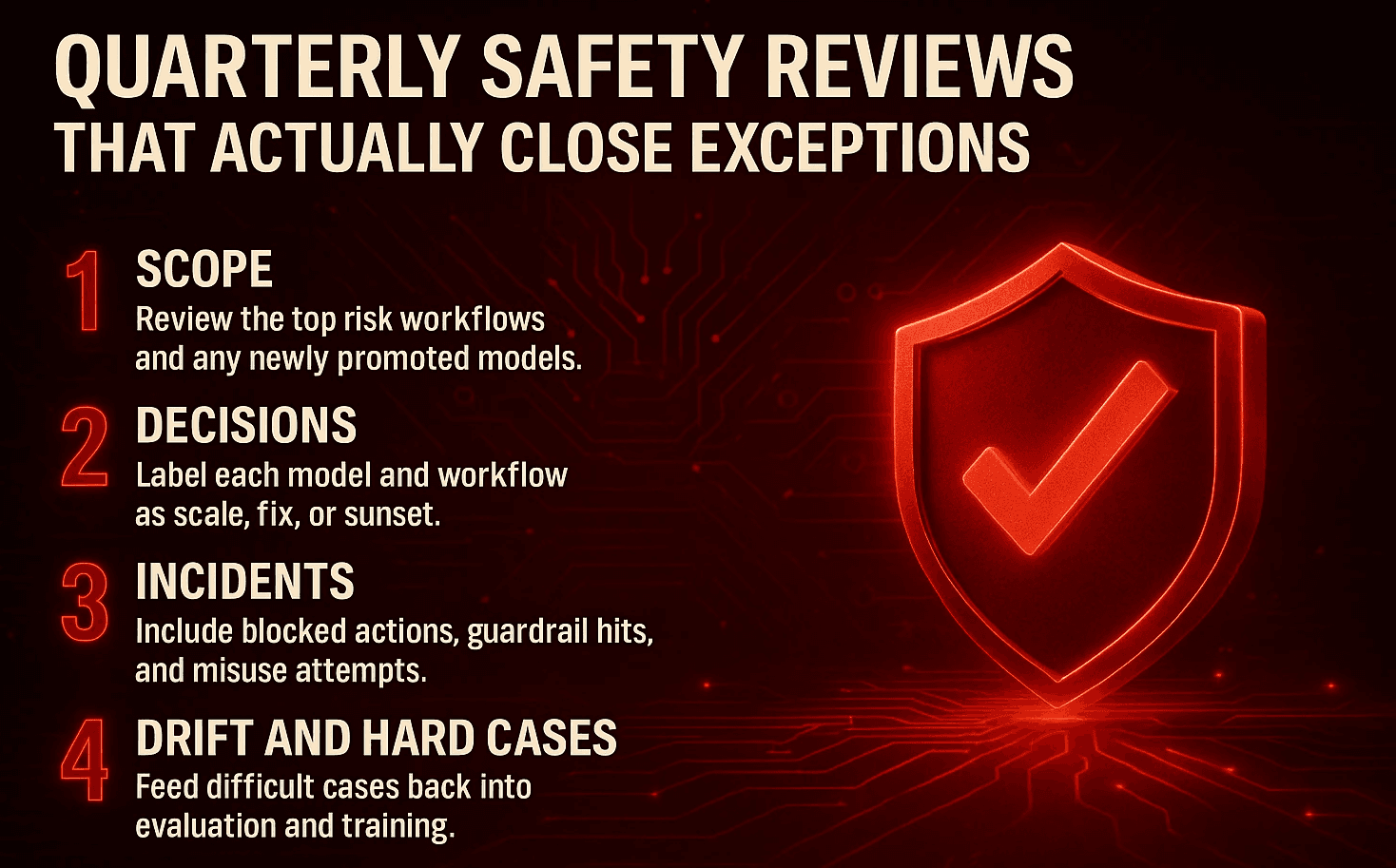

Quarterly safety reviews that actually close exceptions

Treat safety like business operations, not a special event.

Scope: Review the top risk workflows and any newly promoted models. Start with the assurance claim and walk the evidence.

Decisions: Label each model and workflow as scale, fix, or sunset. Record owners and deadlines for exceptions. Tie actions to change management so mitigations are visible and approved.

Signals: Bring incident data to the table. Include blocked actions, guardrail hits, confirmed misuse attempts, and time to contain. Align fixes with the threat model you run in production.

Drift and hard-case mining: Feed difficult cases back into evaluation and training. Update the case with new limits or improved performance. Close the loop every quarter.
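A drift review needs a number to discuss. The article does not name a metric, so the sketch below uses the Population Stability Index (PSI) as one common choice, computed over equal-width bins. The bin count and the 0.25 alert threshold are illustrative assumptions to tune per workflow.

```python
import math


def population_stability_index(baseline, live, bins=10):
    """PSI between a baseline sample and a live sample of one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back when the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(baseline), proportions(live)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


DRIFT_THRESHOLD = 0.25  # illustrative alert level, not a universal rule

baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [0.5 + i / 200 for i in range(100)]
psi = population_stability_index(baseline_scores, shifted_scores)
print(psi > DRIFT_THRESHOLD)
```

Whatever metric you pick, record the value, the threshold, and the action taken in the case so the review shows decisions and not only charts.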

KPIs boards can read at a glance

Measure outcomes that connect policy to proof.

Share of high-risk workflows with an approved assurance case

Mean time to assemble an exportable evidence packet

Open safety exceptions and mean time to mitigation

Drift events detected and resolved per quarter

Coverage of priority populations in evaluation suites

Percentage of releases with verified signatures and attestations

Incidents with complete evidence packets and time to contain
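Two of these KPIs can be computed directly from registry and exception records. The sketch below assumes hypothetical record types `Workflow` and `SafetyException`; your registry fields will differ.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Optional


@dataclass
class Workflow:
    name: str
    high_risk: bool
    has_approved_case: bool


@dataclass
class SafetyException:
    opened: datetime
    mitigated: Optional[datetime]  # None while the exception is still open


def case_coverage(workflows):
    """Share of high-risk workflows with an approved assurance case."""
    high_risk = [w for w in workflows if w.high_risk]
    if not high_risk:
        return None
    return sum(w.has_approved_case for w in high_risk) / len(high_risk)


def mean_time_to_mitigation_days(exceptions):
    """Mean days from open to mitigation, over closed exceptions only."""
    closed = [
        (e.mitigated - e.opened).total_seconds() / 86400
        for e in exceptions
        if e.mitigated is not None
    ]
    return mean(closed) if closed else None


workflows = [
    Workflow("identity-verification", high_risk=True, has_approved_case=True),
    Workflow("sensor-triage", high_risk=True, has_approved_case=False),
    Workflow("doc-search", high_risk=False, has_approved_case=False),
]
exceptions = [
    SafetyException(datetime(2025, 7, 1), datetime(2025, 7, 5)),
    SafetyException(datetime(2025, 8, 1), datetime(2025, 8, 7)),
    SafetyException(datetime(2025, 9, 1), None),
]
print(case_coverage(workflows), mean_time_to_mitigation_days(exceptions))
```

Open exceptions are excluded from the mean here, so report the open count alongside it to avoid flattering the number.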

Implications for autonomous systems, robotics, and defense

Worlds that mix software with physics demand traceability. The same patterns apply whether your system steers a robot arm, pilots an uncrewed vehicle, or triages sensor feeds for a command center.

Sensor reality. Show how sensor shifts and environmental changes affect performance. Include test matrices for weather, lighting, vibration, and occlusion.

Mode switching. Document handover between autonomy and human control. Capture decision logs at the boundaries.

Mission risk. Tie model thresholds to mission risk tolerance. Use explicit go or no-go criteria rather than “it looked fine in testing.”

Certification. Bridge from AI evidence to established certification regimes. Use signed artifacts and traceable lineage to support conformity assessments.
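Explicit go or no-go criteria can be written down as data and checked in code. This is a minimal sketch: the field names, the error-rate limits, and the condition labels are assumptions for illustration, and real criteria would come from the mission risk assessment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GoCriteria:
    max_false_negative_rate: float
    max_false_positive_rate: float
    approved_conditions: frozenset


def go_no_go(criteria, measured_fnr, measured_fpr, condition):
    """Return (decision, reasons). Any failed criterion yields a no-go."""
    reasons = []
    if condition not in criteria.approved_conditions:
        reasons.append(f"condition '{condition}' is not approved")
    if measured_fnr > criteria.max_false_negative_rate:
        reasons.append(f"false negative rate {measured_fnr} exceeds limit")
    if measured_fpr > criteria.max_false_positive_rate:
        reasons.append(f"false positive rate {measured_fpr} exceeds limit")
    return (not reasons, reasons)


criteria = GoCriteria(
    max_false_negative_rate=0.02,
    max_false_positive_rate=0.05,
    approved_conditions=frozenset({"daylight", "overcast"}),
)
decision, reasons = go_no_go(criteria, measured_fnr=0.04, measured_fpr=0.03, condition="fog")
print(decision, reasons)
```

Returning the reasons with the decision gives the decision log something concrete to store at each handover boundary.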

Build choices that keep evidence consistent

You can succeed with open models, proprietary APIs, or a blend. What matters is custody and consistency.

Local custody. When you operate on-prem or in a VPC, you control data residency and log completeness. Evidence stays inside your perimeter.

Blended approach. If you call external APIs, wrap them in your custody layer. Enforce scopes and logging. Require the same IDs, signatures, and events as you do for local models.

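Distillation where it counts. On a stable, bounded task, a distilled model can often deliver 90%+ of the larger model's performance at lower cost, and because you hold the weights and training records, its lineage stays fully inspectable. Use distilled models for tasks that need explainability and fast iteration.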
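A custody layer around an external model can be as thin as a wrapper that emits one structured event per call. The sketch below is an assumption-laden illustration: `with_custody`, the event fields, and the in-memory `sink` list are hypothetical stand-ins for your gateway and SIEM forwarder, and `fake_external_model` replaces a real API client.

```python
import hashlib
import json
import uuid
from datetime import datetime, timezone
from typing import Callable


def with_custody(model_id: str, call_model: Callable[[str], str], sink: list) -> Callable[[str], str]:
    """Wrap a model call so every invocation leaves a structured event in sink."""

    def wrapped(prompt: str) -> str:
        response = call_model(prompt)
        event = {
            "event_id": str(uuid.uuid4()),
            "event_type": "inference_call",
            "model_id": model_id,  # same registry ID scheme as local models
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
        }
        sink.append(json.dumps(event, sort_keys=True))
        return response

    return wrapped


def fake_external_model(prompt: str) -> str:
    # Stand-in for a real external API call.
    return prompt.upper()


events: list = []
guarded = with_custody("ext-model-001", fake_external_model, events)
print(guarded("verify identity"), len(events))
```

This version stores hashes, not full text. Whether you also retain the prompts and responses themselves is a policy choice that depends on your privacy and retention requirements.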

A 12-week path to your first assurance case

Weeks 1–2: Pick one high-risk workflow. Define the claim. List evidence needed and gaps to close.

Weeks 3–4: Stand up a registry entry for the model and datasets. Assign owners. Record lineage and approvals.

Weeks 5–6: Wire training, evaluation, promotion, and inference events into your SIEM. Verify retention and integrity.

Weeks 7–8: Run a red-team exercise. Capture counter-evidence and mitigations. Add misuse tests to the evaluation suite.

Weeks 9–10: Build a reviewer view that links the claim to the evidence. Test the one-minute export.

Weeks 11–12: Hold the first safety review. Label the workflow scale, fix, or sunset. Publish the KPIs. Schedule the next drift review.

Actionable takeaways for leaders

Treat safety as evidence. Require a claim and a case for every high-risk workflow.

Make proof a by-product of normal work. Log what matters as it happens.

Standardize exportable packets for assessors. Do not rebuild evidence at audit time.

Run quarterly safety reviews that end in decisions. Scale the strong. Fix the weak. Sunset the risky.

Track KPIs that fit a board deck. Time to proof. Exceptions closed. Drift events resolved.

Frequently Asked Questions (FAQs)

Q1. What exactly goes into an AI assurance case?

A1. A clear claim for one high-risk workflow, plus linked proof. Include data suitability checks, evaluation results by segment, red-team findings and fixes, signed build and promotion records, live drift and guardrail metrics, and the approvals and access controls. Keep it readable in five minutes, with one-click export of the evidence packet.

Q2. How do we know if our assurance program is working?

A2. Track board-level KPIs: share of high-risk workflows with an approved case, mean time to assemble an exportable packet, open safety exceptions and mean time to mitigation, drift events detected and resolved per quarter, and percentage of releases with verified signatures. If those numbers improve quarter over quarter, the program is creating real control.

Q3. Can we use external API models and still pass audits?

A3. Yes, if you keep custody and consistency. Wrap external models behind your gateway, enforce tool scopes and budgets, log prompts, responses, and tool calls with IDs and signatures, and store events in your SIEM. Use the same registry entries, lineage, and approvals you apply to local models so the assurance case looks identical to auditors.